Your Permissions Model Was Not Built for AI Agents

- Ajit Gupta

- Mar 31

The EU AI Act is not the only regulation your enterprise needs to think about. But it is the clearest signal that the governance gap most organisations have quietly tolerated is about to become a measurable compliance liability.

This is not a policy problem. It is an architectural one.

The Governance Gap Nobody Talks About

Most enterprises deploying AI agents rely on the same access control infrastructure they have used for a decade. RBAC. ABAC. API gateways. Firewall rules.

These tools answer one question well: is this system technically permitted to access this resource?

They do not answer the question regulators are now asking: Was this agent action consistent with the business purpose it was authorised for — in this session, given live context, right now?

The distinction matters because AI agents do not behave like static software. They reason dynamically, traverse systems, and execute sequences of actions that no predefined permission set fully anticipates. A conventional access control layer sees a technically permitted API call. It cannot evaluate whether that call was aligned with the task the agent was authorised to perform.
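To make the gap concrete, here is a minimal sketch of a conventional role-based check. The role name, resources, and permission table are hypothetical and purely illustrative. The point is what the function signature leaves out: it has no notion of the task the agent is working on.

```python
# Hypothetical role-to-permission table; all names are illustrative assumptions.
ROLE_PERMISSIONS = {
    "claims-agent": {("customer_records", "read"), ("claims", "write")},
}


def rbac_allows(role: str, resource: str, action: str) -> bool:
    """Classic RBAC: may this role perform this action on this resource?"""
    return (resource, action) in ROLE_PERMISSIONS.get(role, set())


# The check takes no task or session argument, so both calls below are
# indistinguishable to the access control layer.
in_scope = rbac_allows("claims-agent", "customer_records", "read")      # task: process one claim
out_of_scope = rbac_allows("claims-agent", "customer_records", "read")  # task: unrelated bulk export
```

Both calls return the same answer, because the question of purpose never reaches the check.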

That gap is invisible until it causes an incident — or until a regulator asks for evidence that it did not.

What the Regulations Actually Require

The EU AI Act is the most prescriptive framework currently in force. For high-risk AI systems, which include agents operating in financial services, healthcare, insurance, legal, and critical infrastructure, three obligations are particularly difficult to meet with conventional controls.

Article 9 requires a risk management system running continuously throughout the AI system's lifecycle, scoped to its intended purpose. Not a one-time deployment review. An ongoing process that tracks whether the system operates within the boundaries it was authorised for.

Article 13 requires transparency: a high-risk system must be transparent enough for those deploying it to interpret its output and use it appropriately. For agents, that means explainability. Not just a log of what the agent did, but a demonstrable basis for why each action was permitted. An audit trail of API calls does not answer this question. Regulators will ask whether the action was within scope. You need to be able to show the scope was defined, evaluated, and enforced before execution.

Article 14 requires human oversight mechanisms. The Act does not require a human to approve every action, which would be operationally impossible at scale. For autonomous agents, though, meaningful oversight in practice depends on a governance layer that evaluates intent and context at runtime and produces a tamper-evident record of every decision.

High-risk AI system obligations apply in full from August 2027.

That is closer than it appears for enterprises that need to instrument governance across production agent deployments at scale.

The EU Is Not Alone

The same pattern is emerging across multiple jurisdictions at once, each with its own emphasis but all converging on the same structural requirement.

South Korea's AI Basic Act took effect in January 2026. It uses a risk-based approach and applies to any organisation whose AI activities affect South Korea — including foreign operators without a domestic presence.

Singapore's MAS Technology Risk Management guidelines already require explainability and auditability of AI-driven decisions. More specifically, Singapore's Government Technology Agency published an Agentic AI Primer in 2025, identifying autonomous AI governance as a distinct architectural problem requiring auditable autonomy, human oversight, and adaptive safeguards.

This is the most precise statement any regulator has yet made about what agentic AI governance actually demands technically.

The UK's FCA expects firms to demonstrate that AI systems operate within defined parameters. That expectation is live now, not contingent on 2027 deadlines.

Taiwan's AI Basic Act took effect in January 2026. Canada, Brazil, and India are all progressing risk-based frameworks modelled partly on the EU approach.

The direction of travel is consistent: AI agents must be governed by purpose, not just by permission. The jurisdictions differ on timing and mechanism. They agree on the underlying requirement.

The Architectural Requirement

Meeting these obligations requires four capabilities that static access controls do not provide.

First, a validated, persistent declaration of what each AI system is authorised to do. Not a list of permitted API endpoints, but an enforceable statement of business purpose, stored in a registry with a unique identifier, that defines the outer boundary of every session and every action.
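One way to picture such a declaration is the sketch below. It is an illustration only, not a description of any particular product: the `PurposeDeclaration` and `PurposeRegistry` names, their fields, and the validation rules are all assumptions, and the registry is held in memory where a real one would be persistent.

```python
import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PurposeDeclaration:
    """Hypothetical record of what one AI system is authorised to do."""
    system_name: str
    business_purpose: str
    allowed_activities: frozenset[str]
    # Unique identifier assigned when the declaration is created.
    purpose_id: str = field(default_factory=lambda: str(uuid.uuid4()))


class PurposeRegistry:
    """In-memory stand-in for a persistent purpose registry (an assumption)."""

    def __init__(self) -> None:
        self._by_id: dict[str, PurposeDeclaration] = {}

    def register(self, decl: PurposeDeclaration) -> str:
        # Validate before storing: a purpose must say something and bound something.
        if not decl.business_purpose.strip():
            raise ValueError("business purpose must not be empty")
        if not decl.allowed_activities:
            raise ValueError("at least one activity must be declared")
        self._by_id[decl.purpose_id] = decl
        return decl.purpose_id

    def get(self, purpose_id: str) -> PurposeDeclaration:
        return self._by_id[purpose_id]


registry = PurposeRegistry()
claims_purpose = PurposeDeclaration(
    system_name="claims-agent",
    business_purpose="Process motor insurance claims",
    allowed_activities=frozenset({"read_claim", "update_claim"}),
)
purpose_id = registry.register(claims_purpose)
```

The declaration is frozen so that a registered purpose cannot be quietly mutated; changing scope means registering a new declaration with a new identifier.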

Second, per-session scoping. Before execution begins, a governed declaration of what the agent intends to accomplish in this run, evaluated for alignment with that system purpose. This is the mechanism that makes Article 9's ongoing risk management tractable — scope is defined and validated at the session level, not inferred after the fact.
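A rough sketch of session scoping follows. All names here are hypothetical, and the alignment check is deliberately simplified to a set comparison; a real governance layer would presumably evaluate the stated intent in a richer way.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemPurpose:
    """Hypothetical outer boundary for an AI system."""
    purpose_id: str
    allowed_activities: frozenset[str]


@dataclass(frozen=True)
class SessionScope:
    """Hypothetical declaration of what one run intends to do."""
    session_id: str
    purpose_id: str
    intent: str
    planned_activities: frozenset[str]


def open_session(purpose: SystemPurpose, session_id: str, intent: str,
                 planned_activities: set[str]) -> SessionScope:
    """Validate the session against the system purpose before execution begins."""
    out_of_bounds = set(planned_activities) - purpose.allowed_activities
    if out_of_bounds:
        # Simplified alignment rule (an assumption): reject anything outside the purpose.
        raise PermissionError(f"session exceeds system purpose: {sorted(out_of_bounds)}")
    return SessionScope(session_id, purpose.purpose_id, intent,
                        frozenset(planned_activities))


purpose = SystemPurpose("purpose-001", frozenset({"read_claim", "update_claim"}))
scope = open_session(purpose, "session-42", "Assess claim 123", {"read_claim"})
```

A session that asks for more than the system purpose allows never starts, so scope is fixed before the first action rather than reconstructed afterwards.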

Third, runtime action monitoring. Every action assessed at the point of execution against declared intent, with allow, block, modify, or escalate decisions. Not pattern-matched against static rules — evaluated against the purpose and intent context of this session.
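The four outcomes can be sketched as a single decision function. This is a toy under stated assumptions: the `Action` and `SessionContext` types, the record limit, and the ordering of checks are all hypothetical, and the simple comparisons stand in for whatever evaluation a production layer would actually perform.

```python
from dataclasses import dataclass, replace
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    MODIFY = "modify"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Action:
    activity: str
    record_count: int = 1


@dataclass(frozen=True)
class SessionContext:
    """Hypothetical per-session context the decision is evaluated against."""
    planned_activities: frozenset[str]
    max_records: int
    escalate_activities: frozenset[str] = frozenset()


def evaluate(action: Action, ctx: SessionContext) -> tuple[Decision, Action]:
    """Decide at the point of execution, returning the (possibly modified) action."""
    if action.activity not in ctx.planned_activities:
        return Decision.BLOCK, action        # outside the declared session intent
    if action.activity in ctx.escalate_activities:
        return Decision.ESCALATE, action     # in scope, but needs a human decision
    if action.record_count > ctx.max_records:
        # In scope but too broad: narrow the action instead of refusing it.
        return Decision.MODIFY, replace(action, record_count=ctx.max_records)
    return Decision.ALLOW, action


ctx = SessionContext(
    planned_activities=frozenset({"read_claim", "issue_payment"}),
    max_records=10,
    escalate_activities=frozenset({"issue_payment"}),
)
decision, effective_action = evaluate(Action("read_claim", record_count=500), ctx)
```

The same activity can yield different decisions in different sessions, because the answer depends on the session context and not on a fixed permission table.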

Fourth, an audit trail that reconstructs not just what happened, but why it was permitted — in a form that satisfies risk, compliance, and regulatory requirements. This is what makes Article 13 answerable. The record exists because governance was enforced, not because logs were collected.
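A common way to make such a record tamper-evident is a hash chain, where each entry commits to the one before it. The sketch below assumes that technique; the article does not specify one, and the field names are illustrative.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record in the chain


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log: list[dict], entry: dict) -> dict:
    """Append a decision record that commits to the previous record's hash."""
    if "hash" in entry or "prev_hash" in entry:
        raise ValueError("'hash' and 'prev_hash' are reserved keys")
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {**entry, "prev_hash": prev_hash}
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev_hash = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body.get("prev_hash") != prev_hash or _digest(body) != record.get("hash"):
            return False
        prev_hash = record["hash"]
    return True


audit_log: list[dict] = []
append_record(audit_log, {"session_id": "session-42", "action": "read_claim",
                          "decision": "allow", "reason": "within declared session intent"})
append_record(audit_log, {"session_id": "session-42", "action": "export_customers",
                          "decision": "block", "reason": "outside declared session intent"})
chain_intact = verify(audit_log)
```

Each record carries the reason alongside the decision, so the trail captures why an action was permitted as well as what happened. A chain like this only detects tampering; preventing it would still depend on where the log is stored.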

None of this requires replacing existing IAM infrastructure. It requires a governance layer that operates above it — intercepting agent actions before execution, not reviewing logs after.

The Question Worth Asking Now

If a regulator asked you today — was that agent action within its authorised purpose for that session — could you answer it with evidence?

If the answer is uncertain, the gap is architectural. And the window to close it before enforcement pressure arrives is narrowing across every major jurisdiction simultaneously.

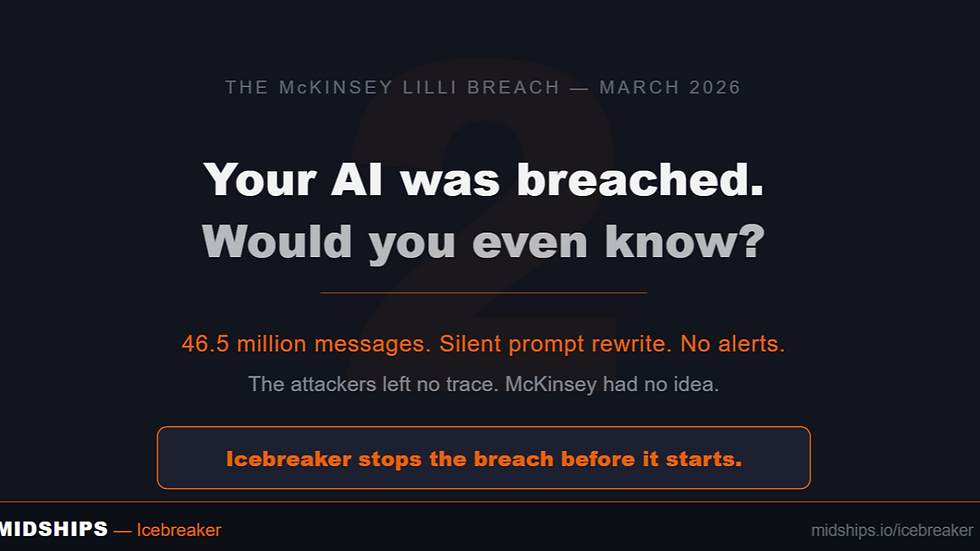

At Midships, we built Icebreaker to address this directly. It is the control plane for governed AI execution — sitting between AI agents and the systems they act on, enforcing business purpose at runtime, and producing the governance record that compliance requires.

No replatforming. No IAM replacement.

Writer’s Overview

Ajit Gupta – Co-Founder & CEO, Midships

Ajit leads Midships Group’s transition from a specialist identity consultancy to a portfolio of autonomous, AI-native business units. He focuses on long-term business relevance through platform thinking, customer outcomes, and scalable operating models.

Short bio: Ajit is a strategic founder with deep expertise in IAM, platform delivery, and AI services, driving Midships’ expansion across Asia, the Middle East, and beyond.
