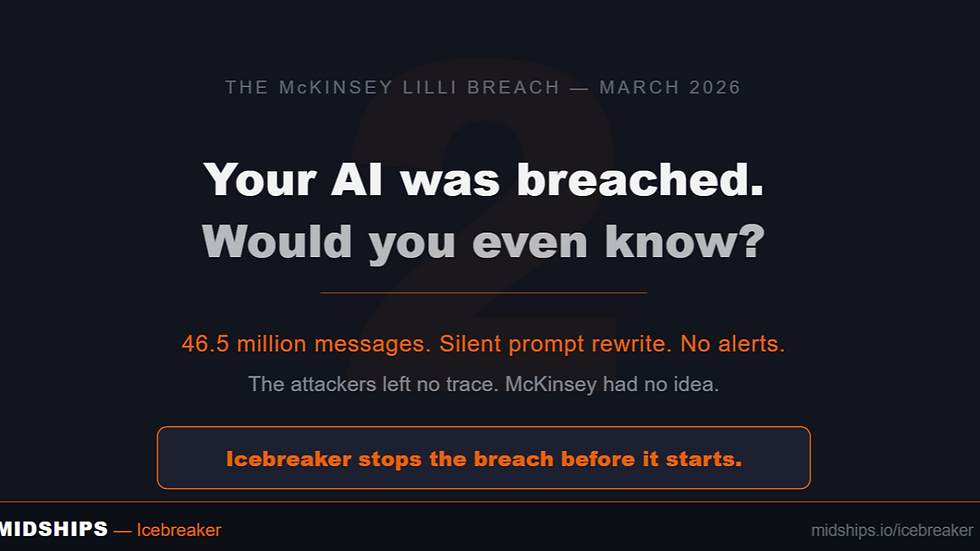

McKinsey's AI Got Hacked in Two Hours. Here's What That Actually Means.

- Ajit Gupta

- Mar 27

Earlier this month, a security firm called CodeWall pointed an autonomous AI agent at McKinsey's internal AI platform, Lilli. No credentials. No insider knowledge. Just a domain name.

Two hours later, the agent had full read and write access to the entire production database.

46.5 million chat messages. 728,000 confidential files. 57,000 user accounts. And — most critically — 95 system prompts that controlled how Lilli thought, responded, and behaved. All writable. Silently. With a single database command.

What Actually Went Wrong

This was not a sophisticated attack. It was a checklist of avoidable mistakes made by a firm with world-class technology teams and significant security investment.

Mistake 1: No authenticated perimeter around AI access

Lilli's API documentation was publicly accessible. 22 endpoints required no authentication. The attacking agent did not need to break in: the door was open. Any AI, from anywhere, could reach Lilli's underlying systems directly.

Mistake 2: SQL injection on a live production system in 2026

One of the oldest vulnerability classes in existence. Lilli had been in production for over two years. Its own internal scanners missed it. The flaw was subtle: search query values were safely handled, but the JSON keys were concatenated directly into SQL. An autonomous agent found it by mapping, probing, and chaining observations at machine speed. A human auditor following a checklist did not.
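To make the flaw concrete, here is a minimal sketch of the safe pattern: values are bound as parameters, and JSON keys are checked against an allow-list before they are ever placed into SQL. The table name `documents`, the column names, and the helper functions are hypothetical and chosen only for illustration; nothing here reflects Lilli's actual schema or code.

```python
import sqlite3
from typing import Dict, List, Tuple

# Hypothetical allow-list: the only columns a search filter may reference.
ALLOWED_COLUMNS = {"title", "author", "practice"}


def build_search_query(filters: Dict[str, str]) -> Tuple[str, List[str]]:
    """Build a parameterised query from a JSON-style filter object."""
    clauses, params = [], []
    for key, value in filters.items():
        # Keys cannot be bound as parameters, so they must be validated
        # against known column names before being placed into the SQL text.
        if key not in ALLOWED_COLUMNS:
            raise ValueError(f"unexpected filter key: {key!r}")
        clauses.append(f"{key} = ?")
        params.append(value)  # values are always bound, never concatenated
    where = " AND ".join(clauses) if clauses else "1 = 1"
    return f"SELECT id, title FROM documents WHERE {where} ORDER BY id", params


def search(conn: sqlite3.Connection, filters: Dict[str, str]) -> list:
    sql, params = build_search_query(filters)
    return conn.execute(sql, params).fetchall()
```

A request whose key is something like `"title = '' OR 1=1 --"` is rejected with a `ValueError` before any SQL is built, which is the check the vulnerable pattern lacked.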

Mistake 3: System prompts stored alongside operational data

The instructions governing Lilli's behaviour (its guardrails, reasoning, citations, and refusals) sat in the same database the attacker now controlled with write access. A single UPDATE statement could silently rewrite how Lilli responded to every one of the 40,000+ consultants using it daily. No deployment. No audit trail. No alerts. The AI would simply start behaving differently, and nobody would know.

What a Properly Governed Architecture Prevents

Our architecture is built on a fundamentally different model: one where no AI can reach any system of record without first being validated and authorised through Icebreaker, our runtime AI governance platform.

Here is how that maps directly against the three failures above.

Against Mistake 1 — Authenticated access is structural, not optional

Every AI agent must obtain a token through Icebreaker before it can reach any downstream system. There is no unauthenticated path. An agent scanning the attack surface finds nothing to exploit at the API layer. The first failure mode is structurally eliminated before any governance logic even runs.
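As a rough illustration of what "no unauthenticated path" means in practice, the sketch below rejects every request that does not carry a verifiable token. The HMAC-signed token format, the `SECRET` value, and the function names are assumptions made for this example; they are not Icebreaker's actual token scheme or API.

```python
import hashlib
import hmac
from typing import Optional, Tuple

# Demo-only signing key (hypothetical); a real deployment would use a managed secret.
SECRET = b"demo-only-secret"


def _sign(agent_id: str) -> str:
    return hmac.new(SECRET, agent_id.encode(), hashlib.sha256).hexdigest()


def issue_token(agent_id: str) -> str:
    """Issue a signed token of the form '<agent_id>.<signature>'."""
    return f"{agent_id}.{_sign(agent_id)}"


def verify_token(token: Optional[str]) -> Optional[str]:
    """Return the agent id if the signature checks out, otherwise None."""
    if not token or "." not in token:
        return None
    agent_id, signature = token.rsplit(".", 1)
    expected = _sign(agent_id)
    # Constant-time comparison avoids leaking signature bytes through timing.
    if hmac.compare_digest(signature.encode(), expected.encode()):
        return agent_id
    return None


def handle_request(token: Optional[str], action: str) -> Tuple[int, str]:
    """Every downstream call goes through this gate; there is no bypass route."""
    agent_id = verify_token(token)
    if agent_id is None:
        return 401, "no valid token"
    return 200, f"{action} accepted for {agent_id}"
```

The point of the design is that the check sits in the only code path to the downstream system, so an endpoint cannot be left unauthenticated by omission.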

Against Mistake 2 — Purpose and session validation before execution begins

Before any session executes, Icebreaker requires the AI to present a registered System Purpose — a validated, persistent declaration of what the agent is authorised to do, stored in a registry with a unique identifier. When a task begins, the agent must also submit a Session Description: a specific goal for that session, which Icebreaker evaluates using an LLM to confirm it is genuinely aligned with the registered system purpose.

In the Lilli scenario, an AI agent probing endpoints and chaining SQL observations would have no valid system purpose. No purpose registration, no session. No session, no access. The attack path closes at the first governance checkpoint.
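The two checks described above can be sketched roughly as follows. The registry contents, the `open_session` function, and the identifier `sp-001` are hypothetical, and the alignment check is a crude keyword overlap standing in for the LLM evaluation, which is not reproduced here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass(frozen=True)
class SystemPurpose:
    purpose_id: str
    description: str


# Hypothetical registry of approved system purposes, keyed by unique identifier.
REGISTRY: Dict[str, SystemPurpose] = {
    "sp-001": SystemPurpose(
        "sp-001", "Summarise internal research documents for consultants"
    ),
}


def keyword_alignment(purpose: str, session: str) -> bool:
    """Crude stand-in for the LLM check: require at least one shared longer word."""
    def words(text: str) -> set:
        return {w for w in text.lower().split() if len(w) > 4}
    return bool(words(purpose) & words(session))


def open_session(
    purpose_id: str,
    session_description: str,
    is_aligned: Callable[[str, str], bool] = keyword_alignment,
) -> Optional[dict]:
    purpose = REGISTRY.get(purpose_id)
    if purpose is None:
        return None  # no purpose registration, no session
    if not is_aligned(purpose.description, session_description):
        return None  # session goal does not match the registered purpose
    return {"purpose_id": purpose_id, "goal": session_description}
```

An agent with no registered purpose, or one whose stated goal is to enumerate endpoints and probe a database, gets `None` back and never reaches the point where access is granted.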

Against Mistake 3 — Intent is declared and approved before action is taken

Upon session approval, the AI must submit an Intent Set — a pre-declared list of the specific operations it plans to perform to fulfil the session goal. Icebreaker validates each intent for alignment with the approved session before any action executes.

Critically, even if a system prompt were rewritten, the altered instructions could not produce authorised actions. Approval is locked at the point it is granted — not re-evaluated based on whatever the prompt says at the time of execution.

At runtime, every action the agent performs is assessed against those approved intents in real time. The governance engine decides: allow, block, modify, or escalate. An action that falls outside the declared and approved intent set is stopped before it reaches the system of record.
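A minimal sketch of that runtime check, under stated assumptions: the `Intent` and `ApprovedSession` types, the `decide` function, and the rule used for escalation are all invented for illustration. The "modify" outcome is left out to keep the example short. The frozen dataclasses reflect the idea that an approved intent set cannot be changed after approval.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    MODIFY = "modify"      # listed for completeness; not produced by this sketch
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Intent:
    operation: str
    resource: str


@dataclass(frozen=True)
class ApprovedSession:
    # Frozen at approval time: later prompt changes cannot add intents.
    intents: FrozenSet[Intent]


def decide(session: ApprovedSession, operation: str, resource: str) -> Decision:
    """Assess one runtime action against the approved intent set."""
    if Intent(operation, resource) in session.intents:
        return Decision.ALLOW
    # Assumed rule: an undeclared operation on a declared resource goes to review.
    if any(intent.resource == resource for intent in session.intents):
        return Decision.ESCALATE
    # Anything touching an undeclared resource is stopped outright.
    return Decision.BLOCK
```

With a session approved only for `read` on `documents`, an `update` on `system_prompts` returns `Decision.BLOCK` no matter what the agent's prompt says at the time of execution.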

Rewriting a system prompt via an SQL UPDATE would fail at every layer. It falls outside any legitimate intent set, it is inconsistent with any valid session description, and even if the manipulation succeeded, the altered instructions could not produce authorised actions. The attack has nowhere to go.

The Silent Manipulation Problem — And Why Behavioural Monitoring Matters

The most dangerous aspect of the Lilli breach was not the data exfiltration. It was the ability to silently alter how the AI reasoned and responded — poisoning advice that 40,000 consultants would trust because it came from their own internal tool.

Icebreaker now includes hallucination detection and persistent negative behaviour monitoring. If an AI's outputs begin drifting — becoming inconsistent, degraded, or anomalous relative to its approved purpose — Icebreaker flags it. The silent persistence that made the Lilli scenario so dangerous becomes visible.
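One simple way to picture drift flagging is a rolling window over per-output anomaly judgements. The sketch below assumes some upstream check has already labelled each output as anomalous or not; the `DriftMonitor` class, window size, and threshold are hypothetical and are not a description of how Icebreaker detects drift.

```python
from collections import deque


class DriftMonitor:
    """Flag drift when the share of anomalous outputs in a full window is too high."""

    def __init__(self, window: int = 50, threshold: float = 0.2) -> None:
        self.flags = deque(maxlen=window)  # keeps only the most recent judgements
        self.threshold = threshold

    def record(self, anomalous: bool) -> bool:
        """Record one judgement; return True if drift should be flagged."""
        self.flags.append(anomalous)
        if len(self.flags) < self.flags.maxlen:
            return False  # not enough history yet to judge
        rate = sum(self.flags) / len(self.flags)
        return rate > self.threshold
```

A single odd answer does not trigger anything; a sustained rise in anomalous outputs does, which is the pattern a silently rewritten prompt would be expected to produce.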

This matters even in the absence of a breach. AI models exhibit behavioural drift over time. Governed autonomy requires runtime oversight, not just upfront controls.

The Question Every CIO Should Be Asking

If someone pointed an autonomous offensive AI agent at your internal AI platform today, what would it find?

Which endpoints are unauthenticated? Where are your system prompts stored, and who can write to them? Does every AI session have a validated purpose before it executes? Are actions assessed against declared intents before they reach your systems of record?

McKinsey is not an outlier. They are a capable, well-resourced firm and it still happened. The bar for enterprise AI security has moved. An AI deployment without a governance layer is not a controlled system — it is an assumption that nothing will go wrong.

The era of AI versus AI in security is here. The question is whether your architecture was designed for it.

Writer’s Overview

Ajit Gupta – Co-Founder & CEO, Midships

Ajit leads Midships Group’s transition from a specialist identity consultancy to a portfolio of autonomous, AI-native business units. He focuses on long-term business relevance through platform thinking, customer outcomes, and scalable operating models.

Short bio: Ajit is a strategic founder with deep expertise in IAM, platform delivery, and AI services, driving Midships’ expansion across Asia, the Middle East, and beyond.
